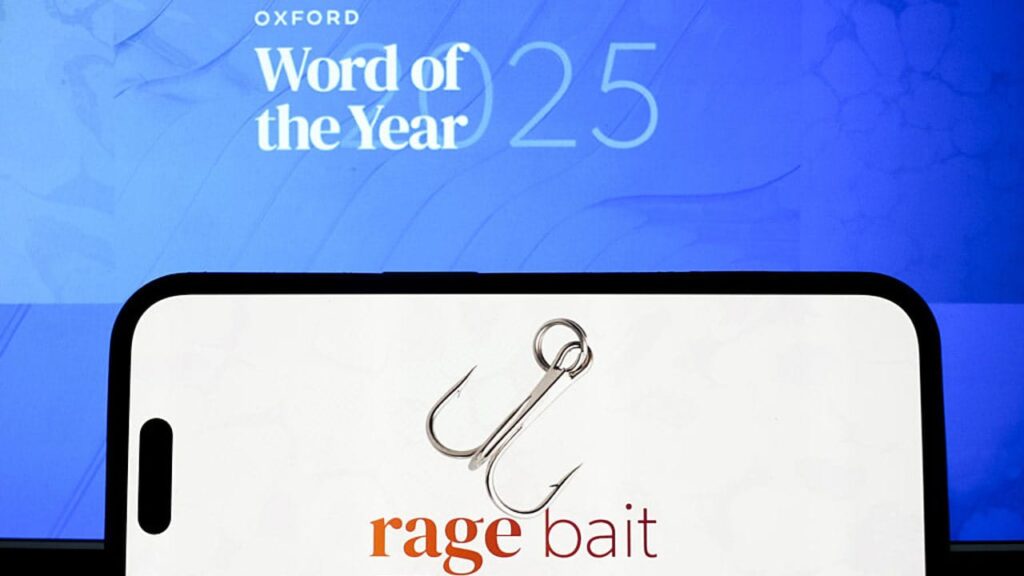

Oxford University Press is shining a light on the more toxic side of internet culture by choosing “rage-bait” as its 2025 Word of the Year. Oxford’s language experts define it as online content deliberately designed to elicit anger or outrage by being frustrating, provocative, or offensive, in order to increase traffic to or engagement with a webpage or social media platform.

To boost engagement, content creators often use dramatic music and exaggerated language to describe situations. They add their own amplified interpretations or solutions, making minor mishaps appear far more serious than they actually are. Even acts committed without any harmful intent are presented as deliberate harm. Such content draws engagement because it touches sensitive themes such as religion, identity, history, and culture. Audiences often treat any perceived harm to religious beliefs, historical literature, or architecture as intolerable.

In a world increasingly polarised by religion, gender, sexual identity, historical narratives, and ideological differences, people tend to regard their own beliefs as superior to those of others. Despite attempts to live in harmony amid these differences, rage-bait content fuels anger and creates pathways to conflict. Where peace-building efforts try to ease tensions, such content deepens hostility. Where negotiation seeks to bridge divides, rage-bait hardens positions and reinforces antagonism.

However, content creators alone do not bear full responsibility. Recommendation algorithms play a crucial role in amplifying division. If a person holds belief A against belief B, the algorithm repeatedly recommends content supporting A and opposing B, because that keeps the user engaged. Over time, users are fed selective arguments, historical events, and narratives that strengthen their existing beliefs. It is not a single post that creates division, but a continuous stream of similar content that makes situations appear increasingly grave.

Therefore, the solution lies in regulating both content and algorithms. While freedom of speech must be protected, it is equally important to ensure that hateful and provocative material does not dominate public discourse. Algorithms should be designed to present multiple perspectives rather than reinforcing echo chambers.

In this context, India's draft Broadcasting Services (Regulation) Bill, 2023 becomes significant. If implemented carefully, it has the potential to balance freedom of speech with social harmony and the integrity of the nation.
